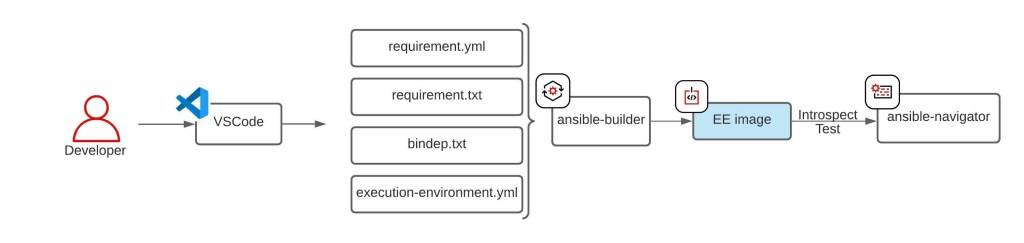

Since Ansible Automation Platform 2.x, playbooks are expected to run inside an execution environment (EE).

This was meant to make Ansible development more portable. On Apple’s M1/M2 silicon, however, it makes things rather tricky.

Short Answer:

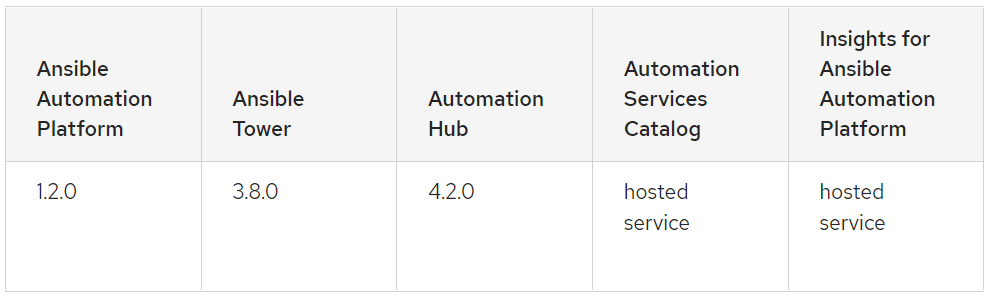

YOU CANNOT CREATE A RED HAT SUPPORTED ANSIBLE EXECUTION ENVIRONMENT ON APPLE SILICON [M1]

Short REASON:

If you use Red Hat’s certified EE minimal images, the build checks near the end whether the HOST machine has a valid Red Hat subscription.

https://access.redhat.com/solutions/4643601

Since I am using a Mac, it finds no valid subscription, so the build fails with the error below:

....

#20 3.932 + /usr/bin/microdnf install -y --nodocs --setopt=install_weak_deps=0 --setopt=rhel-8-for-x86_64-appstream-rpms.excludepkgs=ansible-core subversion

#20 4.064

#20 4.064 (microdnf:61): librhsm-WARNING **: 23:54:03.115: Found 0 entitlement certificates

#20 4.075

#20 4.075 (microdnf:61): librhsm-WARNING **: 23:54:03.128: Found 0 entitlement certificates

...

executor failed running [/bin/sh -c /output/install-from-bindep && rm -rf /output/wheels]: exit code: 1

Longer answer:

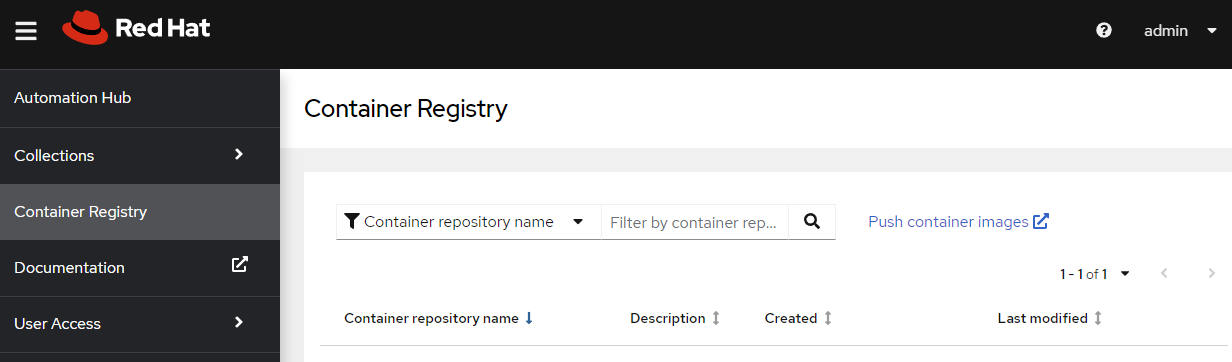

YES, you can still create an EE using the community images.
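As a rough sketch, an EE definition that points at community images could be written as below. The directory name `my-ee` and the variable `ee_dir` are hypothetical. The two `quay.io` image names are my assumption of suitable community images for the version 1 definition format, since the post does not say which ones were used. The three dependency file names are taken from the build log further down and are assumed to already exist next to the definition.

```shell
# Hypothetical working directory; override with EE_DIR if you prefer another location
ee_dir="${EE_DIR:-./my-ee}"
mkdir -p "$ee_dir"

# Version 1 definition that uses community images (assumed names) instead of
# the Red Hat certified ones, so no subscription check is involved
cat > "$ee_dir/execution-environment.yml" <<'EOF'
---
version: 1
build_arg_defaults:
  EE_BASE_IMAGE: 'quay.io/ansible/ansible-runner:latest'
  EE_BUILDER_IMAGE: 'quay.io/ansible/ansible-builder:latest'
dependencies:
  galaxy: requirements.yml
  python: requirements.txt
  system: bindep.txt
EOF
```

Run ansible-builder from inside that directory so it picks up `execution-environment.yml` by default.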

Prerequisites:

- Brew

- Ansible

- Ansible-builder

- Ansible-navigator

- Docker Desktop

- an environment variable “DOCKER_DEFAULT_PLATFORM=linux/amd64”
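A minimal sketch of how the prerequisites above could be checked in a terminal session. The helper name `missing_tools` is made up for illustration. The command names are assumed to match what Homebrew and pip install.

```shell
# Ask Docker to pull and build linux/amd64 images by default in this shell session
export DOCKER_DEFAULT_PLATFORM=linux/amd64

# Print every command from the argument list that is not found on PATH
# (hypothetical helper, not part of any of the tools above)
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Empty output means all prerequisite commands are available
missing_tools brew ansible ansible-builder ansible-navigator docker
```

To make the variable survive new terminal windows, the `export` line can go into your shell profile (for example `~/.zshrc` on a default macOS setup).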

Core issues:

- Ansible execution environment container images are built for x86_64/amd64, whereas M1/M2 Macs are based on arm64

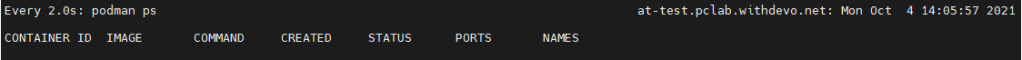

- ansible-builder’s default container runtime “podman” is not available on the M1 Mac
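The architecture mismatch can be seen from the host side with a small sketch like the one below. The function name `to_docker_platform` is hypothetical. It only maps the machine name printed by `uname -m` to the platform string Docker uses, and assumes an Apple Silicon Mac reports `arm64`.

```shell
# Map a machine name as printed by `uname -m` to a Docker platform string
to_docker_platform() {
  case "$1" in
    arm64|aarch64) echo "linux/arm64" ;;
    x86_64|amd64)  echo "linux/amd64" ;;
    *)             echo "unknown" ;;
  esac
}

host_platform=$(to_docker_platform "$(uname -m)")
echo "host platform: $host_platform"

# Anything other than linux/amd64 means amd64 EE images need emulation
if [ "$host_platform" != "linux/amd64" ]; then
  echo "amd64 images will run under emulation on this host"
fi
```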

Solutions:

- Use Docker Desktop to emulate x86_64/amd64

- Set an environment variable so that Docker’s default platform is the emulated x86_64/amd64

- Use the “--container-runtime docker” option of ansible-builder to switch the container runtime from the default “podman” to “docker”.

Command to use:

% ansible-builder build --container-runtime docker -v3 -t <EE TAG NAME> -c <CONTEXT NAME>

Below is the outcome from my command:

Ansible Builder is building your execution environment image. Tags: <EE TAG NAME>

File context/_build/requirements.yml is already up-to-date.

File context/_build/requirements.txt is already up-to-date.

File context/_build/bindep.txt is already up-to-date.

Rewriting Containerfile to capture collection requirements

....

#21 0.362 lrwxrwxrwx 1 root root 12 Jan 12 09:05 yum.conf -> dnf/dnf.conf

#21 0.362 drwxr-xr-x 1 root root 4096 Apr 28 2022 yum.repos.d

#21 DONE 0.4s

#22 exporting to image

#22 sha256:e8c613e07b0b7ff33893b694f7759a10d42e180f2b4dc349fb57dc6b71dcab00

#22 exporting layers

#22 exporting layers 1.3s done

#22 writing image sha256:86e1fe66e05724035fbcf2ecfb3492e70581fd04027ebbe687cad99a00c25d2b done

#22 naming to docker.io/library/<EE TAG> done

#22 DONE 1.3s

Complete! The build context can be found at: /Users/david.joo/Documents/ansible/playpan/context

% docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

<EE TAG NAME> latest 86e1fe66e057 15 minutes ago 1.22GB
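To double-check that the resulting image really is an amd64 image, something along these lines should work. The function names `image_platform` and `is_amd64_image` are hypothetical helpers. They rely on the `Os` and `Architecture` fields that `docker image inspect` reports.

```shell
# Print the platform of a local image as os/architecture, e.g. linux/amd64
image_platform() {
  docker image inspect --format '{{.Os}}/{{.Architecture}}' "$1"
}

# Succeed only if the given image was built for linux/amd64
is_amd64_image() {
  [ "$(image_platform "$1")" = "linux/amd64" ]
}
```

For example, `is_amd64_image <EE TAG NAME> && echo "amd64 image"` prints the message only when the image was built for the emulated platform.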